thought experiments / 2023-03-30

A busy week: copyrightability, throwbacks to late-90s monopoly concerns, who is a coder anyway, permission culture revisited, artistic typography, and so much more.

I was going to open this week with an essay on the commons, open, and ML, but I’m on vacation so … next week :)

Events

(All streaming unless otherwise noted)

- In person (other than me!): The Washington Journal of Law, Technology & Arts 2023 Symposium on Artificial Intelligence and Art, 4pm on April 14th at the U. Washington School of Law. I’ll be speaking on the tension between AI and the lone-genius model of creation. If you’re near UW, you can RSVP.

Values

In this section: what values have helped define open? are we seeing them in ML?

Lowers barriers to technical participation

- Cheaper may be worse? This section of the newsletter has often focused on getting high-performance models to run on low-performance hardware, which was a key prerequisite to the growth of open source in the late-90s/early-00s. So I’d be remiss not to note that there is suggestive evidence that the Alpaca technique I mentioned previously may enable running a model on lower-end hardware but may also make the model less safe.

- Distributed training: I’m old enough to remember when compiling big programs was too much for one computer, so we created hacks to split compilation across clusters. Everything old is new again, so here’s distributed training on commodity hardware.

- SaaS as open competitor: It’s been clear for a while that one key way SaaS “competes” with traditional on-premise open software is by being, arguably, even better than traditional open at lowering barriers to participation. This will be true in ML as well. Last week NVIDIA announced that it will provide “foundation models as a service”, giving some of the benefits of open foundation models (ease of training, customizability) without the openness.
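On the distributed-training item: the simplest form of distributed training is synchronous data parallelism, in which each machine computes a gradient on its own slice of the data, the gradients are averaged (the “all-reduce” step), and every machine applies the same update. Here’s a toy, single-process Python sketch of that loop, assuming a one-parameter linear model. It is not the linked project’s code, which presumably has to cope with real networks and far bigger models.

```python
# Toy synchronous data-parallel SGD, simulated in one process.
# Model: y = w * x, loss = mean squared error.

def shard(data, n_workers):
    """Split the dataset round-robin across workers."""
    return [data[i::n_workers] for i in range(n_workers)]

def local_gradient(w, batch):
    """Gradient of mean squared error for y = w * x on one worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

def all_reduce_mean(grads):
    """Stand-in for a network all-reduce: every worker receives the average."""
    return sum(grads) / len(grads)

def train(data, n_workers=4, lr=0.01, steps=200):
    shards = shard(data, n_workers)
    w = 0.0
    for _ in range(steps):
        # In a real system each of these runs on a different machine.
        grads = [local_gradient(w, s) for s in shards]
        w -= lr * all_reduce_mean(grads)
    return w

if __name__ == "__main__":
    data = [(x, 3.0 * x) for x in range(1, 9)]  # true w is 3
    print(round(train(data), 3))
```

Because the four shards here are the same size, averaging the per-shard gradients gives exactly the full-dataset gradient, so the result matches single-machine training; much of the real engineering goes into making that averaging step cheap over slow links.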

Shifts power

- Copyrightability: IP law allocates power, so whether or not generative AI outputs are copyrightable is (among other things) a power question. Here’s another attempt to make such outputs copyrightable, by emphasizing all the human inputs to the model.

- Old monopolies are new again: One of the key wins for antitrust law in the modern computing age was Microsoft’s decision to port Office to OS X, at a time when Apple’s future was still very uncertain. Apple feared, and everyone else should have feared, Microsoft’s ability to make or break OS X by creating, or denying, Word compatibility. Here (paywalled) Ben Thompson argues that integrating ChatGPT into Office should re-create this “appropriate fear” of Microsoft, and in particular of an ML-empowered Office, as a kingmaker. Thompson frames this as a problem for Silicon Valley, but I think it’s clearly also a challenge for various opens.

Techniques

In this section: open software defined, and was defined by, new techniques in software development. What parallels are happening in ML?

Deep collaboration

Redefining who a ‘coder’ is

I have usually assumed that “increasing human collaboration” and “lowering barriers to participation” were about barriers to participation in open source itself (like, say, participating in your operating system’s development), but of course open source has also lowered barriers to the creation of other software, by making infrastructure components no-cost and easily accessible. You still needed to be able to code, though!

This article persuasively argues that by lowering the coding-skill barrier, code-oriented LLMs like Copilot and Replit will create a vast new wave of software creation. I have no idea what this means for “traditional” open source software, but it bears watching.

Related to the previous point, to define APIs for use by OpenAI, you use… English. (example, with bonus use of RFC 2119.) OpenAI figures out the rest for you; some amazing examples here. On the API consumption side, Zapier is now touting that all the APIs it exposes can be manipulated with plain English as well.

Neither of these is open per se, but both seem poised to enable a giant wave of participation in software development by anyone who can instruct a computer in plain English.
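To make “you define the API in English” concrete: as I understand the ChatGPT plugin format announced in March 2023, a developer hosts a small manifest (`ai-plugin.json`) alongside an OpenAPI spec, and what tells the model when and how to use the API is a free-text `description_for_model` field. The Python sketch below builds such a manifest. The field names reflect my understanding of that format and may have changed since; the plugin name, URLs, and description text are hypothetical placeholders.

```python
import json

# Hypothetical plugin manifest, modeled on the ChatGPT plugin ai-plugin.json
# format as of early 2023. Names, URLs, and description are placeholders.
manifest = {
    "schema_version": "v1",
    "name_for_human": "Todo List (example)",
    "name_for_model": "todo",
    "description_for_human": "Manage a simple todo list.",
    # The model-facing "documentation" is plain English; RFC 2119 keywords
    # (MUST, SHOULD, MAY) are one way to make the instructions less ambiguous.
    "description_for_model": (
        "Plugin for managing a user's todo list. "
        "You MUST ask the user to confirm before deleting a todo. "
        "You SHOULD summarize the list after adding an item. "
        "You MAY suggest due dates."
    ),
    "auth": {"type": "none"},
    "api": {
        "type": "openapi",
        "url": "https://example.com/openapi.yaml",
        "is_user_authenticated": False,
    },
    "logo_url": "https://example.com/logo.png",
    "contact_email": "dev@example.com",
    "legal_info_url": "https://example.com/legal",
}

print(json.dumps(manifest, indent=2))
```

Everything except the English description is boilerplate; the model decides how to call the endpoints in the OpenAPI spec largely from that one paragraph.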

Availability as an aid to collaboration

Collaboration in open software is built in part on the core assumption that “the author can’t change their mind and take this code away from me”. That encourages people to invest their time in a given code base, which in turn encourages the formation of community. We haven’t seen a recall of an ML model yet (though I think that’s coming), but OpenAI has disabled a model that more than 100 research papers were written on. This is not a new problem, exactly, but it highlights that collaboration is aided by (note: does not require!) long-term commitments.

Model improvement

- “Training Data Attribution”: We’re getting a growing body of research on understanding the impact of specific pieces of training data, including attribution. So be aware that “well, LLMs can’t do attribution” is mostly true now but may not be for long.

- Old models may benefit from new training techniques. It’ll be interesting to see whether this has legs as a cheaper way of producing state-of-the-art models.

- Reliability by layering: Most ML model invocation relies on open source stacks, since the “secret sauce” is typically considered to be the model. This allows interesting hacks: in this one, by inserting another layer, the author can exclude outputs that don’t conform to specific tests—making the output more reliable.
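A minimal Python sketch of the layering idea. The `generate` function is a hypothetical stand-in for a real model call (it just picks from canned outputs), and the test applied (valid JSON with an `answer` key) is my own illustration, not necessarily the check the linked hack uses.

```python
import json
import random
from typing import Callable, Optional

def generate(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a model call; sometimes returns malformed output."""
    return rng.choice([
        '{"answer": 42}',
        'Sure! Here is the JSON: {"answer": 42}',
        '{"answr": 42}',
    ])

def is_valid(output: str) -> bool:
    """The extra layer's test: output must be a JSON object with an 'answer' key."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(parsed, dict) and "answer" in parsed

def reliable_generate(prompt: str, check: Callable[[str], bool],
                      max_tries: int = 5, seed: int = 0) -> Optional[str]:
    """Call the model repeatedly until an output passes `check`; None if none do."""
    rng = random.Random(seed)
    for _ in range(max_tries):
        out = generate(prompt, rng)
        if check(out):
            return out
    return None

print(reliable_generate("What is six times seven? Reply in JSON.", is_valid))
```

Note that the model itself is untouched: the added reliability comes entirely from ordinary code wrapped around it, at the cost of extra model calls and the possibility of getting nothing back.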

Instilling norms

Permission culture, revisited: One of the more ironic turns of classical open was that it nominally rebelled against “I have to ask permission to do anything”, but by slapping legal terms on everything it ended up further enshrining the notion that we have to have perfect permission to do anything with IP—what Lessig called “permission culture”. Adobe’s new Firefly image generation tool claims to be trained only on licensed images. Putting aside whether or not that is accurate, the discussion around it does seem to take for granted that creators must have permanent, iron-clad control over their creations—which isn’t what early open advocated for.

Transparency-as-technique

One of the big hype points around GPT-4 was its high scores on various standardized tests. Of course, because OpenAI would not tell us what the training data was, we had to take OpenAI’s word for it that GPT-4 wasn’t trained on the test answers.

Perhaps not surprisingly, it turns out that some of these scores were probably inflated. Since there is no transparency, we instead have to rely on “red teaming”: essentially, probing the AI to understand what it was trained on. It looks like it was trained on at least some of the programming tests it later claimed to ace:

I suspect GPT-4's performance is influenced by data contamination, at least on Codeforces.

Of the easiest problems on Codeforces, it solved 10/10 pre-2021 problems and 0/10 recent problems.

This strongly points to contamination.

1/4 https://t.co/wKtkyDRGGG pic.twitter.com/wm6yP6AmGx

— Horace He (@cHHillee) March 14, 2023

More detail on this problem (and related ones) in this good essay.
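Horace He’s numbers are stark enough that no statistics are needed, but as a worked example of why “10/10 old vs. 0/10 new” points so strongly to contamination: if the model were equally good at old and new problems, how likely is a split that lopsided? A one-sided Fisher’s exact test, sketched below in plain Python, answers that. Treating all 20 problems as comparable in difficulty is an assumption (they were all among the easiest), and the test only measures the split, not its cause.

```python
from math import comb

def fisher_one_sided(a: int, b: int, c: int, d: int) -> float:
    """One-sided Fisher's exact test for the 2x2 table [[a, b], [c, d]].

    Rows: pre-cutoff problems, post-cutoff problems.
    Columns: solved, unsolved.
    Returns P(at least `a` pre-cutoff solves) given fixed row and column totals.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    total = comb(row1 + row2, col1)
    p = 0.0
    for x in range(a, min(row1, col1) + 1):
        # Hypergeometric probability of exactly x solves among pre-cutoff rows.
        p += comb(row1, x) * comb(row2, col1 - x) / total
    return p

# Reported Codeforces results: 10/10 pre-2021 solved, 0/10 recent solved.
p = fisher_one_sided(a=10, b=0, c=0, d=10)
print(f"one-sided p = {p:.2e}")  # exactly 1 / C(20, 10) = 1 / 184756
```

That works out to about 5 in a million, so the split is very unlikely if old and new problems were equally solvable. It can’t by itself rule out other explanations (say, recent problems being subtly harder), which is why outside probing is a weak substitute for simply being told what’s in the training data.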

Joys

In this section: open is at its best when it is joyful. ML has both joy, and darker counter-currents—let’s discuss them.

Humane

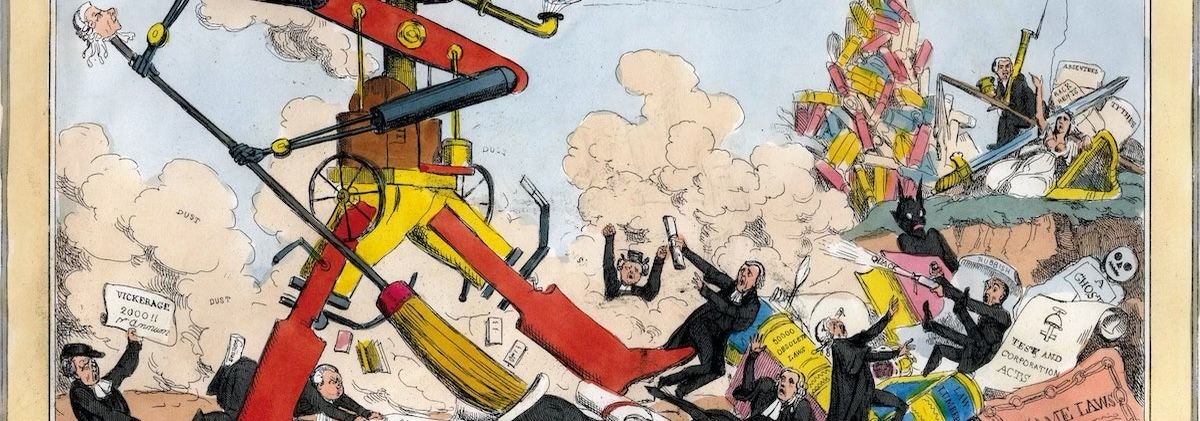

Part of being “humane”/human is a knowledge of our shared human history. So I love this collection from the Public Domain Review of how ’20s (1820s!) Britain saw the march of technological progress at that time.

Similarly, one could lose hours in this class syllabus on how AI-created images interact with art history.

Pointless and fun anyway

New category this week, because I was reminded by a few things that open joy is often pointless fun. :)

- Artistic Typography—letters that are silly, good fun.

- “Stable Diffusion battles”, in which a base image gets remixed via SD

- I love this little example of using ChatGPT to write Python to … invoke more ChatGPT.

Changes

In this section: ML is going to change open—not just how we understand it, but how we practice it.

Creating new things

There’s a lot to be skeptical about in this paper on the disruptiveness of ML, but it gave me a useful label — “general purpose technology” — for techs like the printing press, steam power, etc., that change vast swathes of the economy. Expect to see that term here more often.

That said, we’re also clearly in a bubble—lots of this first wave of startups is going to fail because they’re using the new tech Just Because rather than to solve an actual problem. Here’s the FT on that.

Ethically-focused practitioners

Related to the previous point, while I do think we’re seeing a lot of deep, important ethical work in this space, we’re also going to see some orgs jumping in Because Ethics, rather than because they have an actually useful perspective.

Mozilla has come in with $30M for its new Mozilla.ai effort, but the initial launch announcement is extremely vague. I hope there is more substance to come.

Changing regulatory landscape

- Open as in open letter: There’s an open letter calling for a pause on large model experiments. There’s already critique of the content, but putting that aside, I’m unsure how something like this can hold up against the twin accelerants of vast profits and openly-shared knowledge, even if it were obviously correct. This is absolutely my California Ideology showing through, and I don’t like it, but short of Congress acting tomorrow and sending the FBI the next day, I don’t see how this snowball can be stopped.

- DMCA as regulatory tool: Facebook is using the DMCA to take down derivatives of its LLaMA model. I don’t necessarily think this is a bad thing, but it does highlight one way that “open(ish)” can be distinctly, strictly worse than “open” if you’re trying to build on top of it. Or to put it another way: when Facebook says something is open, read the fine print.

Collaborative tooling

Early open software did not fully anticipate how, in the 2020s, our gigantic software stacks would require extensive documentation of where software came from. Similarly, early American data collection did not anticipate the GDPR. Both spaces (with SBOM and various GDPR-related PII tracking) had to work very hard to retrofit data provenance regimes into their daily workflows.

Emily Bender pithily captures the same problem in the ML space with “too big to document is too big to deploy”—building on a comment by Margaret Mitchell that many people seem to think that it’s (1) possible to solve AGI but (2) not possible to track data sources.
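For a sense of what “documenting” training data means at its most basic, here is a Python sketch of a provenance manifest in the spirit of an SBOM: one record per source file, with a content hash so the record can later be checked against what was actually trained on. The field names (`source_url`, `license`) are hypothetical, not taken from SPDX, CycloneDX, or any other standard.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from pathlib import Path

@dataclass
class ProvenanceRecord:
    path: str
    sha256: str
    source_url: str  # hypothetical field: where the data came from
    license: str     # hypothetical field: terms under which it was obtained

def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large files don't need to fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()

def build_manifest(files: dict[Path, tuple[str, str]]) -> list[ProvenanceRecord]:
    """`files` maps each local path to (source_url, license)."""
    return [ProvenanceRecord(str(p), sha256_of(p), url, lic)
            for p, (url, lic) in sorted(files.items())]

def verify(records: list[ProvenanceRecord]) -> list[str]:
    """Return the paths whose current contents no longer match the recorded hash."""
    return [r.path for r in records if sha256_of(Path(r.path)) != r.sha256]

if __name__ == "__main__":
    import tempfile
    with tempfile.TemporaryDirectory() as d:
        sample = Path(d) / "sample.txt"
        sample.write_text("hello, training data\n")
        records = build_manifest({sample: ("https://example.com/sample", "CC-BY-4.0")})
        print(json.dumps([asdict(r) for r in records], indent=2))
        print(verify(records))  # [] because the file hasn't changed
```

The hashes are what make the manifest checkable after the fact, and they are also the part that is hardest to retrofit: if they aren’t recorded at collection time, they can’t reliably be reconstructed later.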

Misc.

- We should, at the very least, be able to train on most of the old content of all the world’s museums. But (hard fact) scanning is expensive, and (cultural reality that could be changed) half of museums think they can claim copyright in 400-year-old works they didn’t create.

- I keep saying that ML will be most powerful first in domains where you can vet the ML’s output for accuracy, but I did not expect vets (veterinary medicine) to be one of those domains. Interesting story from the owner of a sick dog.

- The Data Transfer Initiative is a group (funded by several big players) for furthering personal data export from those same big players. It is not about ML per se, but it’s interesting to note that they’re getting more serious by hiring an Executive Director (Chris Riley, ex-Mozilla among other things). I mention it here because I suspect “using personal data to train personal ML models” is going to become a thing.

Closing note

Two thought experiments to end the note on:

- What if a great method of censorship-resistant communication turns out to be … distributing models?

- What happens when you can tell an ML “rewrite this entire subsystem in Rust”? Or when you can feed an ML a log of an API’s inputs and outputs and tell it to re-implement?

Still feeling pretty good about "this is going to be the printing press".
